
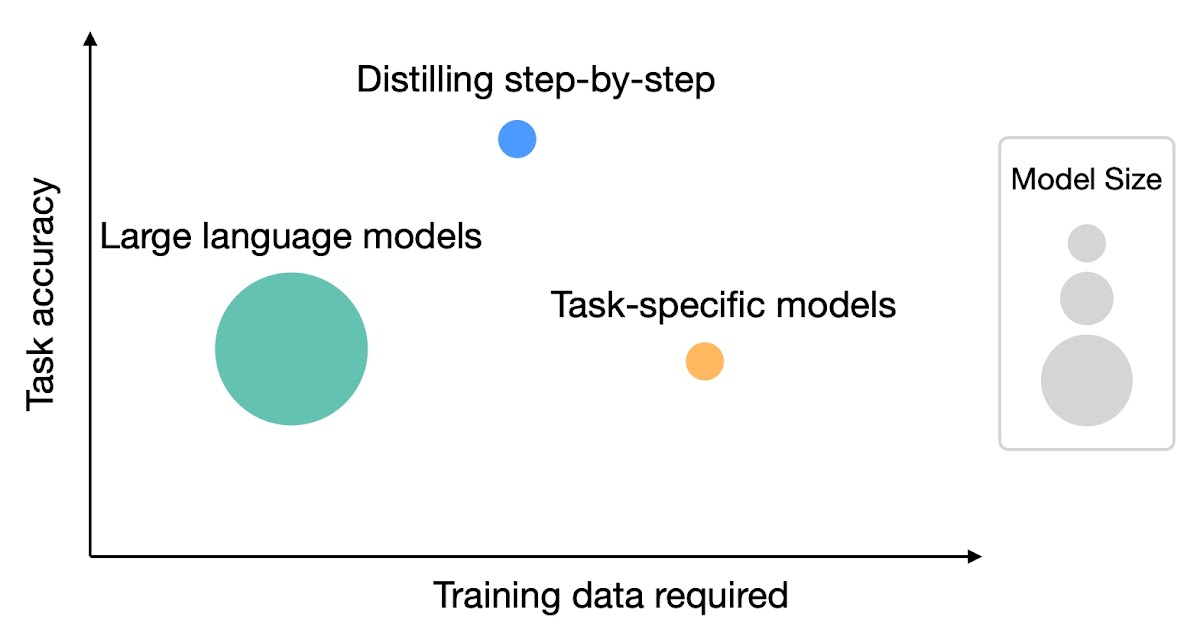

Whoa, this is pretty interesting stuff. I wonder how practical it is to do; I don’t see a repo offering a script or anything, so it may be quite involved, but it looks promising. Anything that reduces size while maintaining performance is huge right now.

The code is available here:

Somehow this is even more confusing, because that code hasn’t been touched in 3 months. Maybe it just took them that long to validate? I’ll have to read through it, thanks!